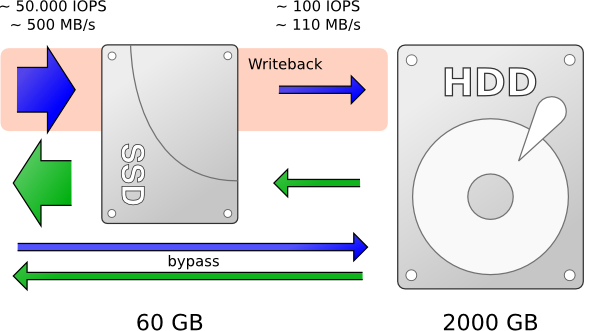

A Linux flash cache can substantially improve the performance of a mechanical hard disk drive, or any other block device, by dynamically migrating some of its data to a faster, smaller SSD. The usual default (in bcache, for example) is writethrough mode, which is the safest choice unless you mirror the flash, but which on the other hand doesn't spare the backing device (HDD) from many random write operations. The other mode is writeback, which keeps written data in the cache (SSD) and writes it back to the backing device once in a while.
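To make the difference concrete, here is a toy model (not how any real cache is implemented) that counts how many writes reach the HDD in each mode. The workload and the function names are made up for illustration, and writeback is simplified to a single flush at the end.

```bash
# Toy model only: count writes that reach the backing device (HDD)
# for a sequence of block numbers written by an application.

# Writethrough: every write goes to the SSD and the HDD together.
writethrough_hdd_writes() {
    echo "$#"
}

# Writeback: writes only mark the cached block dirty; a flush writes
# each distinct dirty block to the HDD once.
writeback_hdd_writes() {
    if [ "$#" -eq 0 ]; then
        echo 0
        return
    fi
    printf '%s\n' "$@" | sort -u | wc -l | tr -d ' '
}

# Hypothetical workload: block numbers in the order they are written.
writes="7 3 7 7 9 3 7"

# $writes is left unquoted on purpose so it splits into one argument per block.
echo "writethrough: $(writethrough_hdd_writes $writes) HDD writes"
echo "writeback:    $(writeback_hdd_writes $writes) HDD writes after one flush"
```

The more often the same blocks are rewritten, the bigger the gap, which is why writeback helps most with random, repetitive write loads.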

http://blog-vpodzime.rhcloud.com/?p=45

http://info.varnish-software.com/blog/accelerating-your-hdd-dm-cache-or-bcache

bcache (Linux 3.10):

https://coelhorjc.wordpress.com/2015/03/25/how-to-cache-hdds-with-ssds-using-bcache/

https://bcache.evilpiepirate.org/

https://evilpiepirate.org/git/linux-bcache.git/tree/Documentation/bcache.txt

```bash
## install (pick the line for your distribution)
$ sudo yum install bcache-tools
$ sudo apt-get install bcache-tools

## usage, assuming the HDD is /dev/sda and the SSD is /dev/sdb

# wipe the devices (wipefs is part of util-linux)
$ wipefs -a /dev/sda1 ; wipefs -a /dev/sdb1

# format the backing (HDD) and cache (SSD) devices
$ make-bcache -B /dev/sda1 ; make-bcache -C /dev/sdb1

# attach the cache device to our bcache device 'bcache0'
# (C_Set_UUID_VALUE is the cache set UUID printed by make-bcache -C)
$ echo C_Set_UUID_VALUE > /sys/block/bcache0/bcache/attach

# create and mount fs
$ mkfs.ext4 /dev/bcache0
$ mount /dev/bcache0 /mnt

# optionally use faster writeback (instead of default writethrough);
# the mode is saved in the backing device's superblock
$ echo writeback > /sys/block/bcache0/bcache/cache_mode

# register the backing device by hand if bcache0 does not show up
# (e.g. no udev rule did it at boot)
$ echo /dev/sda1 > /sys/fs/bcache/register

# monitor
$ bcache-status -s
```
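Reading the same `cache_mode` file back lists all modes and puts the active one in square brackets. A small helper like the one below (the function name is my own, and `bcache0` is the device assumed above) pulls out just the active mode, which is handy in scripts:

```bash
# Print the active mode from a bcache cache_mode line, where the
# current selection is marked with square brackets.
active_cache_mode() {
    printf '%s\n' "$1" | sed -n 's/.*\[\([^]]*\)].*/\1/p'
}

# Sample line in the format of /sys/block/bcache0/bcache/cache_mode
sample='writethrough [writeback] writearound none'
active_cache_mode "$sample"

# On a live system:
# active_cache_mode "$(cat /sys/block/bcache0/bcache/cache_mode)"
```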

dm-cache (Linux 3.9):

https://www.kernel.org/doc/Documentation/device-mapper/cache.txt

https://www.kernel.org/doc/Documentation/device-mapper/cache-policies.txt

http://blog.kylemanna.com/linux/2013/06/30/ssd-caching-using-dmcache-tutorial/

```bash
#!/bin/bash
clear

# Cache variables (cache_* are left over from the EnhanceIO variant of
# this script; the dmsetup table below hard-codes its devices)
source_device="/dev/dm-0"
cache_device="/dev/sdc2"
cache_policy="lru"
cache_mode="wt"
cache_block_size="4096"
cache_name="cache1"

# FIO variables
fio_blocksize="4K"
file_size="20G"
iodepth="8"
numjob="4"
runtime="300"
output_path="/root/eio_perf/dmcache/ram_8G_50G_wt_100_read_0_write_4K_4K"
hits="90"

mkdir -p "${output_path}"
echo "Output path '${output_path}' is created"

# Create a cache
echo "Creating a cache"
#eio_cli create -d ${source_device} -s ${cache_device} -p ${cache_policy} -m ${cache_mode} -b ${cache_block_size} -c ${cache_name}
dmsetup create "${cache_name}" --table '0 195309568 cache /dev/sdc2 /dev/sdc1 /dev/sdb1 512 1 writethrough default 0'
echo
dmsetup table
echo

# Warm up the cache
echo "${hits} % Hit_Warm_Up_${fio_blocksize}"
fio --direct=1 --size="${hits}%" --filesize="${file_size}" --blocksize="${fio_blocksize}" \
    --ioengine=libaio --rw=rw --rwmixread=100 --rwmixwrite=0 --iodepth="${iodepth}" \
    --filename="${source_device}" --name="${hits}_Hit_${fio_blocksize}_WarmUp" \
    --output=/tmp/WarmUp.txt

# Run the test
echo "${hits} % Hit_${fio_blocksize} ${source_device}"
fio --direct=1 --size=100% --filesize="${file_size}" --blocksize="${fio_blocksize}" \
    --ioengine=libaio --rw=randrw --rwmixread=90 --rwmixwrite=10 --iodepth="${iodepth}" \
    --numjobs="${numjob}" --group_reporting --filename="${source_device}" \
    --name="${hits}_Hit_${fio_blocksize}" --random_distribution=zipf:1.2 \
    --output="${output_path}/${hits}_Hit_${fio_blocksize}.txt"

# Delete the cache
echo "Deleting the cache"
dmsetup remove "${cache_name}"

# Wipe stale metadata of the dm device, if any. Not doing this will cause
# cache hits during the warm-up phase on successive runs, contrary to our
# expectation.
echo "Wiping out cache metadata"
dd if=/dev/zero of=/dev/sdc2 oflag=direct bs=1M count=1
dd if=/dev/zero of=/dev/sdc1 oflag=direct bs=1M count=1
```
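The `--table` string passed to `dmsetup` is the hard part to read. Going by the kernel's cache.txt linked above, its fields are: start, length, `cache`, metadata device, cache device, origin device, block size, number of feature arguments, the features, policy, and number of policy arguments. Length and block size are counted in 512-byte sectors. The helper below is only a sketch (the function name and argument order are my own) that rebuilds the line used in the script:

```bash
# Build a dm-cache table line with one feature argument (the mode)
# and the default policy with no policy arguments.
# Usage: cache_table <origin sectors> <metadata dev> <cache dev> <origin dev> <block sectors> <mode>
cache_table() {
    origin_sectors=$1 metadata_dev=$2 cache_dev=$3 origin_dev=$4
    block_sectors=$5 mode=$6
    printf '0 %s cache %s %s %s %s 1 %s default 0\n' \
        "$origin_sectors" "$metadata_dev" "$cache_dev" "$origin_dev" \
        "$block_sectors" "$mode"
}

# Same values as in the script above
cache_table 195309568 /dev/sdc2 /dev/sdc1 /dev/sdb1 512 writethrough

# What those sector counts mean in more familiar units
echo "origin: $((195309568 * 512 / 1024 / 1024)) MiB, cache block: $((512 * 512 / 1024)) KiB"

# On a live system the origin size can be read with blockdev:
# dmsetup create cache1 --table "$(cache_table "$(blockdev --getsz /dev/sdb1)" /dev/sdc2 /dev/sdc1 /dev/sdb1 512 writethrough)"
```

Note that in this table `/dev/sdc2` holds the metadata and `/dev/sdc1` is the actual cache, which is why the script wipes both partitions at the end.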

Performance:

https://www.redhat.com/archives/dm-devel/2013-June/msg00026.html

https://github.com/stec-inc/EnhanceIO/wiki/PERFORMANCE-COMPARISON-AMONG-dm-cache,-bcache-and-EnhanceIO

https://www.redhat.com/en/blog/improving-read-performance-dm-cache

Please note: this can be used on top of Red Hat VDO deduplication to increase VDO performance.
